What is Docker and why should you use it?

Docker is an open-source platform that allows software developers and IT administrators to easily develop, package, and distribute applications in containers. But what exactly is a Docker container? And why do we need Docker? In this blog, we’ll walk you through the key aspects of Docker and how it has fundamentally changed software development and IT management.

What is a Docker Container?

A Docker container is a lightweight, standalone, executable unit that contains everything an application needs to run: from code and runtime to libraries and settings.

Containers bundle their own libraries and dependencies, so they behave the same on any host that provides a compatible kernel and a container runtime. This makes them flexible and highly portable.

Containers are not only compact but also highly efficient. They use shared resources from the host operating system, resulting in significantly less overhead compared to traditional virtual machines (VMs). This makes Docker containers ideal for modern software development, where flexibility and speed are crucial.

Start immediately with a pre-built container

Unlike virtual machines, containers share the kernel of the host operating system, which leads to less duplication of resources.

This means you can run many containers on the same machine without wasting hardware. Another big advantage is that you can get started with a pre-built container right away, without spending time on configuration.

Tip

Want to learn more about how containers can contribute to your IT strategy? Check out our Managed Container Services and discover how Combell can help you with a fully managed platform.

The benefits of Docker

Tip

A major advantage of Docker is that it is compatible with many programming languages and frameworks. This makes it a versatile solution for development teams with diverse technology stacks. In practice, companies that adopt Docker can often shorten their time-to-market, which is a significant competitive advantage.

Why do we need Docker?

In a traditional development environment, you often encounter challenges such as inconsistency between development, test and production environments, resulting in lost time and errors.

Docker offers a solution here by providing a uniform environment, regardless of where the container is running. Some key reasons why Docker is indispensable:

Treating Infrastructure as code

Moreover, Docker fits perfectly within the DevOps philosophy, which focuses on collaboration between developers and administrators. By treating infrastructure as code, you make processes repeatable and minimise human error.

A concrete example: suppose you are developing an e-commerce application. You can create a Docker container that contains all dependencies, such as the correct version of a database and the application code. Whether you run this container locally, on a test environment or in production, the behaviour remains the same.
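To make this example more tangible, here is a minimal Python sketch that renders a Dockerfile for a hypothetical shop application. The base image tag, the install command and the start command are assumptions chosen for illustration, not a recommendation. The point is only that the environment is written down as text and can be reviewed and versioned like any other code.

```python
import json


def render_dockerfile(base_image: str, install_cmd: str, start_cmd: list[str]) -> str:
    """Return the text of a simple Dockerfile for an application image."""
    lines = [
        f"FROM {base_image}",               # pinned base image with the runtime
        "WORKDIR /app",                     # working directory inside the image
        "COPY . .",                         # copy the application code into the image
        f"RUN {install_cmd}",               # install dependencies at build time
        f"CMD {json.dumps(start_cmd)}",     # exec-form start command (JSON array)
    ]
    return "\n".join(lines) + "\n"


# Hypothetical values for an imaginary Node.js shop application.
dockerfile = render_dockerfile(
    base_image="node:20-alpine",
    install_cmd="npm ci",
    start_cmd=["node", "server.js"],
)
print(dockerfile)
```

Saved as a file named Dockerfile next to the application code, this text is what `docker build` would read to produce the same image locally, on a test server or in production.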

Want to be completely unburdened in managing your containers? Our Managed Container Services are the ideal solution for companies looking for maximum reliability and performance.

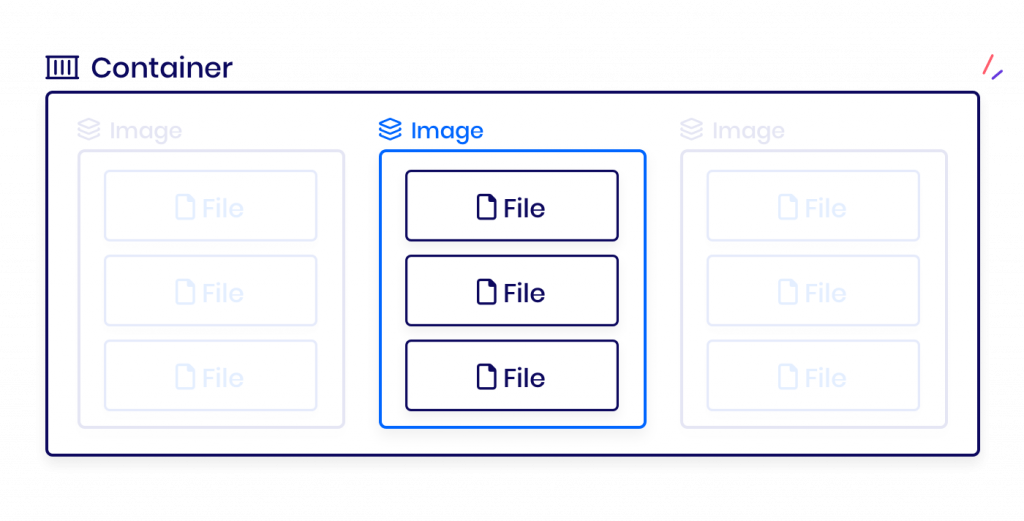

What are Docker images?

A Docker image is the building block of a container. It contains the application code, along with the required libraries, dependencies, and configurations.

When you build an image, you create a complete, standalone environment that remains consistent regardless of where it is executed.

How to use a Docker image:

A key advantage of Docker images is that they can be easily shared within teams through a registry, enabling better collaboration and speeding up development processes. Additionally, Docker images can be versioned with tags, which is essential for larger development projects.

A development team can create a base image for a Node.js application. Every developer can use this image to get started immediately, without wasting time on configuration issues.
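Sharing and versioning such a base image typically comes down to tagging it, building it and pushing it to a registry. The sketch below only assembles the corresponding `docker build` and `docker push` commands and prints them, so it runs without Docker installed. The registry address and version number are hypothetical.

```python
import shlex


def image_ref(repository: str, tag: str) -> str:
    """Combine a repository name and a tag into a full image reference."""
    return f"{repository}:{tag}"


def build_and_push_commands(repository: str, tag: str, context: str = ".") -> list[list[str]]:
    """Return the CLI commands that build a tagged image and push it to its registry."""
    ref = image_ref(repository, tag)
    return [
        ["docker", "build", "-t", ref, context],  # build from the Dockerfile in `context`
        ["docker", "push", ref],                  # upload the tagged image
    ]


# Hypothetical registry and version, for illustration only.
commands = build_and_push_commands("registry.example.com/team/node-base", "1.4.0")
for cmd in commands:
    print(shlex.join(cmd))
```

A teammate could then fetch exactly that version with `docker pull` and the same image reference, or use it in the `FROM` line of their own Dockerfile.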

How does Docker work?

Docker operates on a client-server architecture composed of the following four components:

- Docker Engine: The core of Docker, responsible for building, running, and managing containers. The engine runs on the host machine and includes three key parts: the Docker daemon (dockerd), the Docker Engine REST API, and the docker command-line client (CLI).

The Docker Engine REST API plays a crucial role in container management. It allows you to write automated scripts that send Docker API requests to the daemon. Thanks to this API, developers can seamlessly integrate containers into existing workflows.

- Docker Images: A Docker image is an immutable template used to create containers. Think of it as a "snapshot" of an application and all its dependencies.

- Docker Containers: Containers are created from images. They are dynamic and can be easily started or stopped.

- Docker Hub: An online repository where you can find and share Docker images. Developers can start with standard images (e.g., for Node.js or Python) and customize them to meet specific needs.
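To illustrate how a script can talk to the daemon through the Docker Engine REST API, here is a small sketch that uses only the Python standard library. It assumes a Linux host where the daemon listens on the default Unix socket `/var/run/docker.sock` and that the current user may access it. The helper names are made up for this example. If no socket is found, the script simply skips the request.

```python
import http.client
import json
import os
import socket
from urllib.parse import urlencode

DOCKER_SOCKET = "/var/run/docker.sock"  # assumed default socket path on Linux


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that uses a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0):
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def list_containers_path(include_stopped: bool = False) -> str:
    """Build the request path for the 'list containers' endpoint."""
    query = urlencode({"all": "true" if include_stopped else "false"})
    return f"/containers/json?{query}"


def list_containers(socket_path: str = DOCKER_SOCKET, include_stopped: bool = False) -> list:
    """Ask the daemon for its containers and return the decoded JSON list."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", list_containers_path(include_stopped))
        response = conn.getresponse()
        body = response.read()
        if response.status != 200:
            raise RuntimeError(f"daemon answered with HTTP {response.status}")
        return json.loads(body)
    finally:
        conn.close()


if os.path.exists(DOCKER_SOCKET):
    try:
        for container in list_containers(include_stopped=True):
            print(container.get("Id", "")[:12], container.get("Image"), container.get("State"))
    except (OSError, http.client.HTTPException, RuntimeError, ValueError) as exc:
        print(f"Could not query the Docker daemon: {exc}")
else:
    print("No Docker socket found; skipping the request.")
```

The `docker` command-line client sends the same kind of requests to the daemon, which is why anything you can do from the CLI can in principle also be automated from a script.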

The use of Docker has led many companies to adopt new technologies more quickly and achieve more efficient workflows.

Additionally, Docker supports plugins and integrations with tools like Kubernetes, GitLab, and Jenkins, enhancing usability in complex CI/CD pipelines.

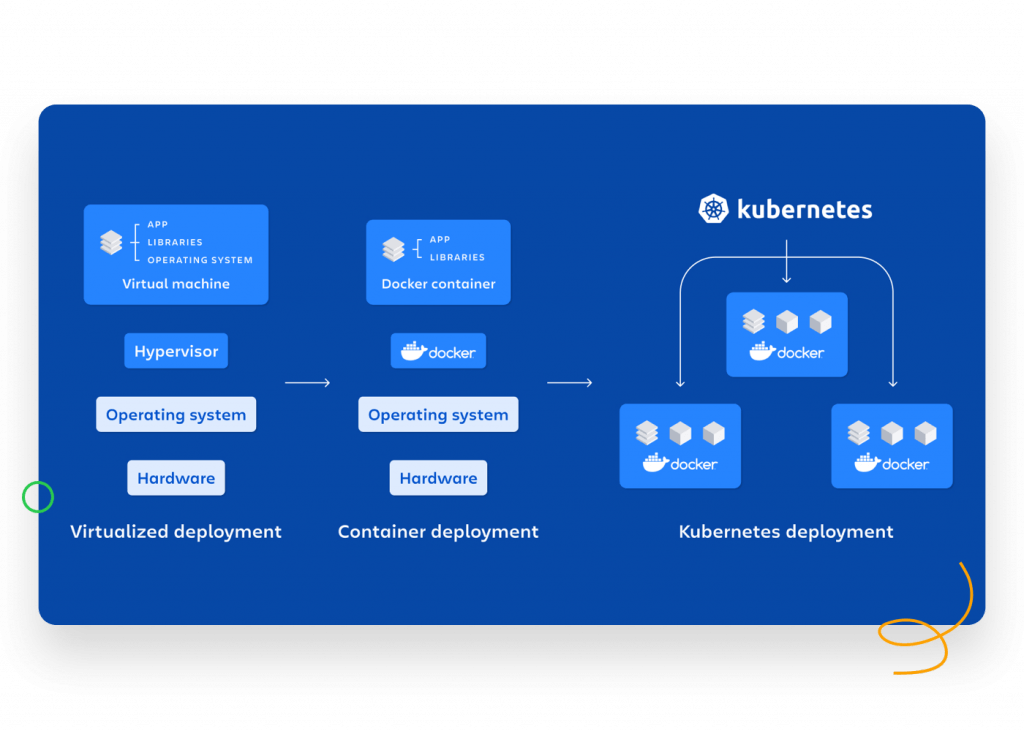

What is the difference between Docker and Kubernetes?

Docker and Kubernetes are often mentioned together, but they serve different purposes:

- Docker is a platform for creating, deploying, and managing containers.

- Kubernetes is a container orchestration platform that helps manage multiple containers at scale.

Docker on its own is well suited to running containers on a single host, whereas Kubernetes becomes valuable once you manage many containers across multiple machines. With Kubernetes, businesses can increase their productivity and efficiency. It also offers self-healing functionality, automatically restarting failed containers.

Docker Compose

Imagine you have a web application with a frontend, backend, and database. With Docker, you can create containers for each of these components, and with Docker Compose, you can easily define and manage them together in a single configuration.

Docker Compose allows you to start, stop, and link multiple containers at once so your application functions as a cohesive unit.
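As a rough illustration, the Python sketch below holds a Compose configuration for such a three-part application as plain text and builds the commands to start and stop it. The service names, build directories, ports and credentials are placeholders invented for this example, and the commands are only printed, so nothing is started.

```python
import shlex

# Hypothetical Compose configuration; save it as compose.yaml to use it.
COMPOSE_YAML = """\
services:
  frontend:
    build: ./frontend
    ports:
      - "8080:80"
    depends_on:
      - backend
  backend:
    build: ./backend
    environment:
      DATABASE_URL: postgres://shop:example@db:5432/shop
    depends_on:
      - db
  db:
    image: postgres:16
    environment:
      POSTGRES_USER: shop
      POSTGRES_PASSWORD: example
      POSTGRES_DB: shop
"""


def compose_command(action: str, compose_file: str = "compose.yaml") -> list[str]:
    """Return the docker compose command for starting ('up') or stopping ('down')."""
    actions = {"up": ["up", "-d"], "down": ["down"]}
    if action not in actions:
        raise ValueError(f"unsupported action: {action}")
    return ["docker", "compose", "-f", compose_file, *actions[action]]


print(COMPOSE_YAML)
print(shlex.join(compose_command("up")))    # start all three services in the background
print(shlex.join(compose_command("down")))  # stop and remove them again
```

Inside the Compose network, the backend reaches the database under the service name `db`, which is how the containers are linked without hard-coded IP addresses.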

As your application grows and gains more users, you’ll likely need an orchestrator such as Kubernetes to automatically start additional containers, distribute peak loads, and restart stuck containers.

However, Docker Compose remains a handy tool for developing and testing applications on a smaller scale before deploying them to a production environment.

Kubernetes also offers advanced features like auto-scaling, self-healing, and load balancing, making it ideal for large-scale cloud-native applications. Combell even offers an entry-level Kubernetes model.
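The idea behind self-healing and scaling can be shown with a toy model: you declare how many copies of a service should run, and a control loop keeps correcting the actual state until it matches. The Python sketch below is a deliberately simplified simulation with invented names. It does not use the Kubernetes API and leaves out everything a real orchestrator does around scheduling and networking.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Tiny simulation of a desired-state control loop."""

    desired_replicas: int
    running: list[str] = field(default_factory=list)
    _counter: int = 0

    def crash(self, name: str) -> None:
        """Simulate a container that fails and disappears."""
        self.running.remove(name)

    def reconcile(self) -> list[str]:
        """Start or stop containers until the actual state matches the desired state."""
        actions = []
        while len(self.running) < self.desired_replicas:
            self._counter += 1
            name = f"web-{self._counter}"
            self.running.append(name)
            actions.append(f"start {name}")
        while len(self.running) > self.desired_replicas:
            name = self.running.pop()
            actions.append(f"stop {name}")
        return actions


cluster = ToyCluster(desired_replicas=3)
print(cluster.reconcile())    # initial rollout: three containers are started

cluster.crash("web-2")
print(cluster.reconcile())    # self-healing: the missing container is replaced

cluster.desired_replicas = 2
print(cluster.reconcile())    # scaling down: one surplus container is stopped
```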

Docker in practice

"Where is Docker used?" you may ask. Docker is applied in many areas, by both individual developers and large companies. Examples include:

- Software Development: Developers use Docker to build and test applications in a consistent environment.

- Continuous Integration/Continuous Deployment (CI/CD): Docker integrates seamlessly into CI/CD pipelines, enabling faster releases.

- Cloud Environments: Cloud providers like AWS, Azure, and Google Cloud offer extensive support for Docker.

- Microservices: Docker is the perfect partner for a microservices architecture, where each service runs in its own container.

A real-life example: a large e-commerce company uses Docker to build a scalable infrastructure. With containers, they can easily test new functionalities without risk of failure in their production environment.

In addition, Docker is increasingly used in education and research. Students and researchers can easily experiment with complex software configurations without the risk of far-reaching failures.

Getting started with Docker

Starting with Docker is simpler than you might think. Follow these steps:

- Install Docker Desktop: Available for Windows, macOS, and Linux.

- Learn the Basics: Begin by creating a simple Docker container.

- Use Docker Hub: Download existing images to experiment with.

- Create Your Own Images: Learn how to write Dockerfiles and build custom images.

Docker offers extensive documentation and tutorials to help beginners get started quickly. Additionally, online communities are available for support.

A great starting point is running a simple web server in a container. This helps you learn the basics of Docker, such as starting and stopping containers.
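A first session along those lines could look like the sketch below. It lists the commands for running a web server from a pre-built image, checking on it, and stopping, restarting and removing it. The container name, the `nginx:alpine` image and host port 8080 are assumptions for this example. The commands are printed rather than executed, so the script also runs on a machine without Docker.

```python
import shlex


def web_server_lifecycle(name: str = "web", image: str = "nginx:alpine",
                         host_port: int = 8080) -> list[list[str]]:
    """Return the CLI commands for a typical start/stop cycle of one container."""
    return [
        # Start the container in the background and map host_port to port 80 inside it.
        ["docker", "run", "-d", "--name", name, "-p", f"{host_port}:80", image],
        # Check that the container is running.
        ["docker", "ps", "--filter", f"name={name}"],
        # Stop it, start it again, and finally remove it.
        ["docker", "stop", name],
        ["docker", "start", name],
        ["docker", "rm", "-f", name],
    ]


for cmd in web_server_lifecycle():
    print(shlex.join(cmd))
```

After the first command, the web server's default page should be reachable at http://localhost:8080 for as long as the container is running.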

What is Docker Desktop?

Docker Desktop is an all-in-one solution for developers to easily run Docker locally. It provides a user-friendly interface for managing containers, images, and settings, making it ideal for beginners.

With Docker Desktop, you can manage containers locally without needing a full operating system for each container, significantly improving efficiency.

Using Docker Desktop, you can:

Docker Desktop is available for Windows, macOS and Linux, and runs without problems on modern systems.

A key advantage of Docker Desktop is the ability to experiment locally with the same configurations used in production, which minimises problems during the transition between development and production environments. This makes it ideal for companies that want a hybrid cloud strategy, where local and cloud environments need to work seamlessly together.

Docker: A solution for modern software development challenges

Docker is a powerful tool that has significantly improved software development and IT management. By using containers, you can develop and deploy applications quickly, consistently, and flexibly. Whether you are an individual developer or leading a large IT team, Docker offers an efficient solution to the challenges of modern software development.

Additionally, Docker is an excellent springboard to more advanced technologies like Kubernetes and cloud-native development. With its wide range of applications and benefits, Docker has had a lasting impact on how software is built and managed.

Using Docker for your development process can increase your productivity and improve the overall quality of your software.

Would you like support in managing your containers? Then check out Combell's Managed Container Services and find out what we can do for your company.